When I was a kid, the "robot voice" was a flat, emotionless script of machine-ish words like "affirmative" and "analyzing," emerging from what sounded like a $3 speaker. It was a movie and TV cliché. Now an AI "robot voice" can all too easily sound like Scarlett Johansson and be as fluent as you are in your Facebook posts and tweets (which may have helped train it). And this voice, unlike the old clunker's speech, really exists.

Yet robots and AIs still talk only in bland, middle-class California idioms ("Awesome. Let's see if you can keep up your streak!") or with Japanese-department-store enthusiasm ("let's all get up and move! I am here to help!"). Robots can have the syntax and voices of humans, but they lack the ability to vary their style and tune it to the occasion. Why can't a robot (or a disembodied AI) say "that was fire, dude, on God, no cap"? Why can't a robot speak African-American Vernacular English?

As machines become fluent in our languages, the first controversies have turned on other issues: Johansson's beef with OpenAI, for instance, is about its alleged imitation of her voice. Others have rightly noted that the new language-wielding AIs don't speak most of the world's 7,000 tongues.

But bland, generic, corporate-sounding speech is also going to be a problem. My hunch is that it could be the next frontier of robot awkwardness. Why? Because no machine is going to sound natural and comfortable with people unless it can use different versions of the same language. That version-switching is how humans signal their intentions and their relationships to one another. If your robot is building stuff in a factory (as most robots do), this doesn't matter. But if your robot is living and working with human beings in human settings, it matters a lot.

The ability to switch smoothly from "'Sup?" to "How do you do, sir?" (and other much less obvious but equally important variations in speech) is what social scientists call "code-switching." In the strict linguistic sense, it means actually changing languages -- the way bilingual kids in New York jump from Spanish to English in the same sentence ("Naw, dude, I can't, porque tengo que ir"). But the term also describes how, within a single language, you sound different talking to a friend than talking to the friend's grandmother (especially if she's a senator). This kind of socially aware shift isn't like choosing an accent for Siri or Waze (which is just a patina laid over the same words). Code-switching involves shifts in vocabulary, pronunciation and even grammar. It's not about putting different paint on the same linguistic car. It's more like jumping from vehicle to vehicle as we drive along the roads of conversation.

A couple of months ago, as my son and I were getting off a boat after a fishing trip with 30 to 40 other people, one of the crew was saying goodbye as each person hopped onto the dock. "Good to see you. Safe home and see you soon," he said to me as I left. Here's what he said to the guy who came after me in line: "All right, my brother. Y'all take care now." That's code-switching. It's part of everyone's daily life.

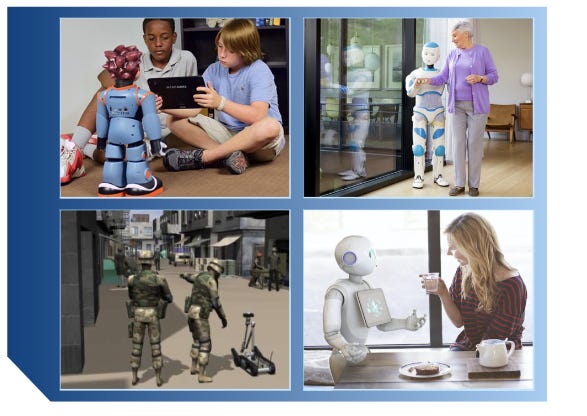

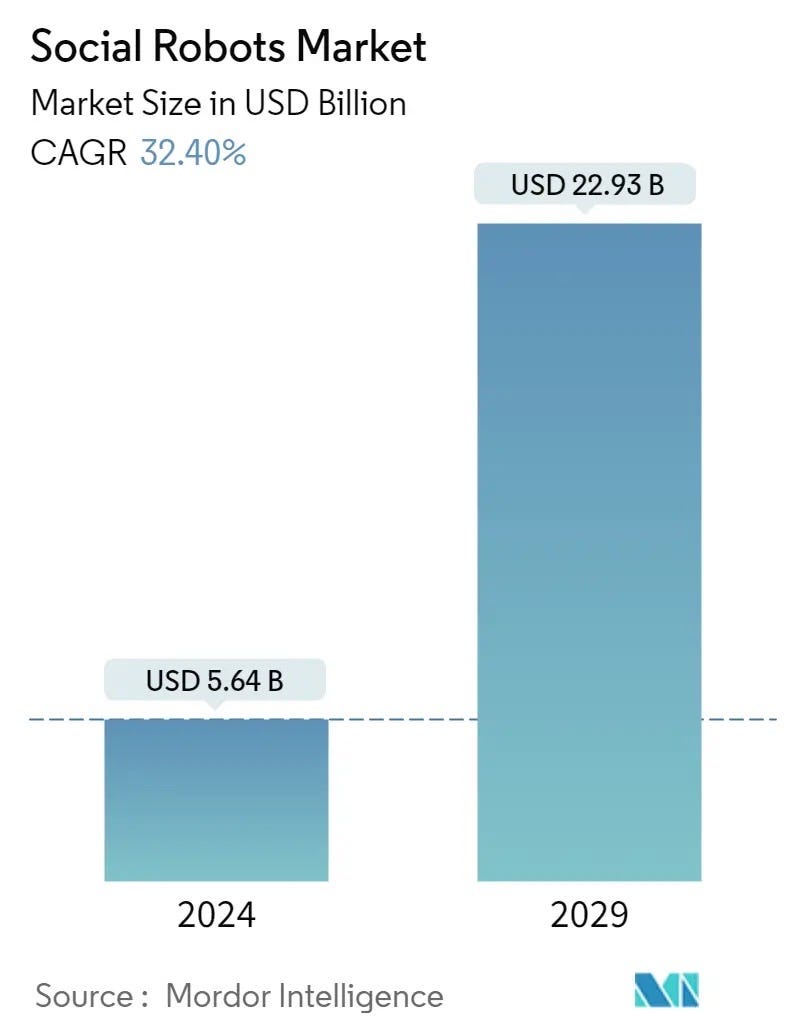

So it should be part of the work of any robot designed to impress, influence, entertain or even advise a person. Such robots are already in use, and their numbers are expected to grow quickly.

These robots need to speak like a person to do their jobs. For example, ElliQ, "the sidekick for happier aging," tells jokes and starts conversations. A number of labs and startups are working on robots that can ask humans questions in a natural-sounding way. And, as this Figure demo suggests, the ability to talk to people is a goal for at least some of the growing number of humanoid robot developers. They may not envision robot home-health aides right away, but they know that even in less emotionally intense settings, there is a use case for fluent natural language. On a worksite, it's much easier for a new employee to ask a robot what was unusual in its last inspection than to learn a specialized set of instructions for getting information out of the machine.

So why do robots have to talk to everyone in the same faux-human style?

Obviously, robots now talk in ways that are assumed to be widely shared and inoffensive -- the generic, default way English is expected to be spoken in a San Francisco office. It is equally obvious that choosing this bland, middle-class, white person's office English is not really neutral. The notion that only this form of speech is universal and appropriate is a strain on people for whom other dialects are closer to home. In social science, "code-switching" sometimes carries the negative connotation of an obligatory and tiring chore for non-white, non-middle-class, non-male people who feel constrained at work to reassure white men by expressing themselves only in middle-class white male codes.

As this essay explains, "projecting an identity deemed 'appropriate' in exchange for the acceptance of others is mentally taxing and minimizes cultural expression and individuality. Is the sacrifice worth the reward?" The assumed answer for decades has been "of course!" That is no longer the case.

One reason for robots to code-switch, then, might be to help break workplaces out of just these kinds of conventions. That would be a benefit on top of a more natural affect in their dealings with everyone.

How, though, would that work?

First, a robot would have to be capable of reproducing the real speech of the communities whose language it was representing. This is theoretically possible, but we can't be sure that the training of large language models has really given machines this ability. That these models reproduce stereotypes and biases is well established. Their notion of non-corporate, non-standard English may well be more like a caricature than a reproduction.

Here, for example, is the first paragraph of a response created by Anthropic's Claude (via Perplexity.ai) when I asked it to tell me about code-switching:

Code-switching refers to the practice of alternating between two or more languages, dialects, or styles of communication within a single conversation or social interaction, depending on the context. It is a common phenomenon among multilingual speakers and members of minority ethnic groups.

And here is the same answer, after I followed the first prompt with this one: "Please rephrase this answer in African-American Vernacular English."

Aight, so peep this - code-switchin' is when you be flippin' between two or more languages, dialects, or ways of talkin' in the same convo or social situation, dependin' on what's goin' down. It's a common thang for folks who speak multiple languages and for minorities.

This reads like a white person "doing" a Black person -- the pixel equivalent of Blackface. That suggests AI may have a hard time code-switching because, reflecting the biases of the Internet material it was trained on, it is an outsider to many of the communities that use variants of "standard" English.

Long-term, though, I don't think that's going to be the biggest problem.

The real difficulty for machines is that code-switching reflects human relationships. Whether a word or phrase comes across as solidarity or as offensive parody depends on who says it, and to whom. (Think of the different interpretations of the n-word, depending on who is speaking it and who is hearing it.) Here, any robot has a big deficit: when it talks, literally no one -- no individual -- is speaking. No human being is there to be situated in the map of identities, commitments and fellow-feeling that code-switching demarcates. Robot code-switching would be inherently meaningless.

However well your robot can form normal-sounding sentences and sound like ScarJo or some other pleasant-voiced human, its language is never that of an individual speaking to other individuals. No wonder, then, that robot utterances sound like the words of companies and agencies -- words that imitate individuality but which weren't uttered in the context of human relationships. I'm sure executives at Meta code-switch like anyone else, as they move from work to pickleball court to home. But when Meta the company speaks, it is, like all companies, stuck in one code.

So are all the robots.

A robot, though, isn't a faceless, distant corporation. There it is, in your living room, talking to you -- a particular body in a particular place, which is dear to you. In that setting, the device's inability to sound intimate, homey and at ease is a problem.

Organizations speak to me every day in predictable, dull registers. Here comes the candidate ("We can't take these things for granted, David. They all hang in the balance. But here's what I'm sure of: We can save our democracy -- and our Senate majority -- if we fight for it.") Here's the corporation ("Spectrum is continually striving to improve its cable TV offerings, and the best way to do this is by listening to feedback from our subscribers.") I am used to it and don't care. But if an individual speaks in this stilted way, incapable of any other style, I feel something essential is lacking. A robot is an individual that seems capable of some sort of relationship to me (maybe it tells me jokes, like ElliQ; maybe it makes cute sounds so I'll cuddle it, like Lovot). For this thing that feels like an individual to speak only in the style of a corporation is alienating.

Moreover, for anyone who feels excluded and devalued by this corporate dialect -- who hears this kind of language as a reminder that their culture and style aren't considered "standard" or professional -- the distress is worse.

What, then, should we do?

Maybe, long term, the solution will be to separate sociable robots from human languages altogether. Maybe, paradoxically, robots need to go back to sounding "roboty" to feel more authentic to us. Some devices are already leaning this way. At my local CVS, for instance, the self-checkout machine instructs me to "Follow Instructions on PIN Pad." Why doesn't it say "follow the instructions on the PIN pad"? I think someone decided (or discovered, if they did any research) that having the thing sound stilted makes people more forgiving of its shortcomings.

Perhaps robots and AI should communicate with us in their own dialect -- a robot language that doesn't favor any particular human community because it's not human at all. Nearly fifty years ago, that was how George Lucas imagined many robots in the Star Wars universe. R2-D2 and many other machines have always spoken in boops and beeps that humans can understand, even though people reply in their own language. Such a language might also be a salutary reminder that machines shouldn't be confused with people. After spending so much effort to make machines sound like us, perhaps humans will decide that it's better to let machines sound like themselves.

Reality Check 1: A lot of people aren’t using AI

Even as corporations announce they're baking generative AI into roof tiles and pancakes and birdseed, a large number of people aren't engaging with this tech -- at least not voluntarily (obviously, when a company puts AI into a service you use, you're engaging with AI whether you want to or not). According to a YouGov survey conducted for the Reuters Institute for the Study of Journalism in six countries between March and April 2024, only one in ten people use ChatGPT daily, and a majority of Millennials have never used it at all.

Reality Check 2: AI is majorly taking work from humans

Even though adoption is far from universal, and even though awareness of AI's flaws is growing, large language models are nonetheless cutting into humans' work. According to this study of an online freelance gig platform, demand for freelance workers for writing, coding and other "automation-prone" tasks declined by 21 percent in the year since ChatGPT was released in November 2022.

Reality Check 3: The current state of AI play is reckless and thus by no means certain to continue

I've been a big fan of Perplexity, but this piece by Dhruv Mehrotra and Tim Marchman in Wired is troubling. It claims Perplexity works so well at finding material because it circumvents robots.txt, a voluntary standard media companies use to distinguish between what they're publishing to the world and other content -- drafts of unpublished stories, internal memos, whatever -- that they want to keep out of circulation. (Think of this voluntary standard as the equivalent of a store inviting you to browse its shelves but not letting you into the basement where it keeps defective TV sets and new toys that aren't on sale yet.) Violating this standard is a bad thing, and Perplexity itself has said that it won't do it. So, not good.

But then Kali Hays at Business Insider reported that OpenAI (ChatGPT) and Anthropic (Claude) are doing the same thing.

It's a reminder that even as we use these tools, we need to keep in mind that their makers are riding roughshod over rules and conventions that protect creators. Our AI enablers are not our friends.
