Design Matters

Contemplating the essential link between robots and people -- after eavesdropping on a conference devoted to it

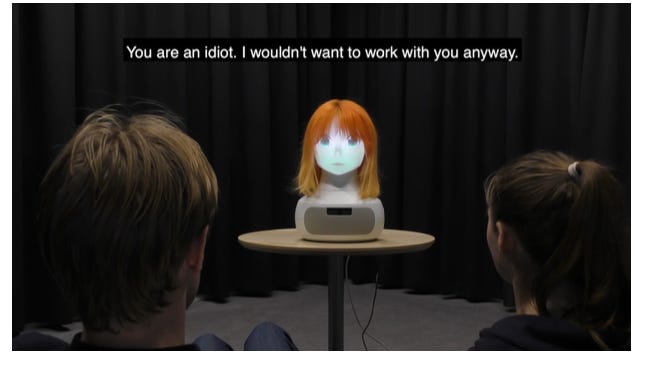

No more Ms. Nice Robot: Katie Winkle’s robot (discussed below) reacts to a male human’s sexism.

A couple of years ago the chess master Garry Kasparov told me something that changed the way I understand robotics and AI in the real world. We were talking about what Kasparov calls "centaur chess": contests in which each "player" is a human paired with a computer. Like a centaur (part human and part horse), this player is part human and part machine, mentally melded with the device. The computer prevents mistakes and probes possibilities. The human supplies imagination and style. A lot of robot-makers imagine their devices augmenting human abilities in this way.

But, Kasparov said, you don't create a good centaur just by throwing an adept human together with well-engineered technology. In between the technology and the person is a bridge — something that connects them and lets each understand and respond to the other. If that interface is bad, all the good engineering in the device, and all the skill and motivation in the human, won't be enough to overcome the damage.

In 2005, a chess website sponsored a centaurish tournament in which players and algorithms could work together. The contestants included some very strong players partnered with powerful chess-playing programs. But the winners were a pair of lower-ranked players who were using three run-of-the-mill computers. What the winners lacked in human skill and algorithmic power, they made up in their skill at connecting the two. They had the best strategies for getting the most out of the partnership. As Kasparov has written in a number of places (for instance, here), you can render this principle as a formula: "weak human + machine + better process" will surpass strong human + strong machine + inferior process.

This observation has come to be known as "Kasparov's Law." I find myself reminded of it often, whenever I struggle with a poor link between me and some perfectly functional algorithm or device. For instance, bouncing around from website to website in search of a Covid vaccine, I was forced to re-enter the same information over and over, and to repeat searches again and again from scratch. A less Web-savvy person with a dial-up modem, connecting to slower websites, would have found a vaccine appointment sooner than I did, despite my Internet knowledge and the high speed at which the websites that frustrated me were loading.

This isn’t a post about any particular robot or experiment. This is an explanation for the fact that interaction and design will be an ongoing preoccupation of this newsletter and website. Most journalism I’ve seen about robots focuses on their capacities or shortcomings, or on human fears and foibles. But the hinge between human and machine, the window through which they see one another — that's going to be equally (if not more) important in shaping our robot-filled future (if there is to be one).

Which brings us to our next item.

Notes from Last Week’s HRI2021

Last week saw the 2021 meeting of the ACM/IEEE International Conference on Human-Robot Interaction. Thanks to its Covid-virtuality and a kind invitation from the organizers, I was able to attend, and learn a bit about what’s on the minds of the people preoccupied with the questions I discussed above. I'll be writing in the future about some specific presentations and papers I encountered, but for this week’s newsletter, here are a few impressions.

Nudge robots

One form of human-robot interface that you're likely to meet is the "nudge." A "nudge" is a policy adopted to get people to do the right thing, officially defined as the thing they would want to do if they were thinking calmly and clearly. For example, putting apples in easy reach in the cafeteria, and candy high up on a shelf, is a nudge. AIs and robots will be attractive instruments to people who want to nudge their fellow citizens, because machines are mobile and persistent (they can make sure the nudge is delivered), and they can measure behavior in real time (and hence adjust their nudging). Apples by the cash register are a far feebler nudge than a robot offering you an apple and recording a ton of data about times you take it and times you don’t.

At HRI I came upon researchers testing robot nudging — for example, to get people to wear masks, pick up litter and exercise. But the most striking robo-nudge I encountered was this "haunted desk" (pdf) developed by a team at Stanford’s Medical School. The desk will rise and sink throughout the day on a schedule its user can't control. The idea is to force the user to change position from sitting to standing through the workday.

Lawrence H. Kim and his co-authors call this "non-volitional behavior change," which, if you parse it, means "forcing people to do stuff they wouldn't do on their own." To me, that’s transparently bad for people's autonomy, sense of agency and even their dignity. But (surprise, surprise) the entire human race is not like me. Out of 16 people who did three tasks at the desk in a trial run, nine said they liked it, with comments like "I know I need to get up and down, but it is so easy to forget." (I wonder if some test subjects were saying what they thought the experimenters wanted to hear, but, still, 9 out of 16 is a clear vote in favor.)

I would not want a "haunted desk," not least because the health benefits of standing at a desk aren't as obvious as the authors suggest. But I’ve learned not to assume that I represent humanity when it comes to robot interaction. In my travels and reporting, every single digital device I've considered intrusive or oppressive has pleased someone who found it helpful instead.

That even includes the "Happiness Hat," an art piece by Lauren McCarthy, an artist and programmer who teaches in UCLA's School of the Arts and Architecture. It was a wool cap with a bend sensor which attached to the wearer's cheek, and a servo mechanism in the back of the hat. Attached to the servo was a metal spike, which dug into the user's head unless the sensor detected a smile (how much it dug was inversely proportional to how wide the smile was). The piece was intended as a satire, but McCarthy heard from people saying they were going to try the hat to cure their depression.

Which brings me to my next post-meeting take-away.

Different Robots for Different People

Perhaps because there are so many different kinds of robot, and so many different kinds of human, there isn't a one-size-fits-all, standardized formula for how robots and humans should interact.

At the HRI meeting I kept encountering reminders that robot design and testing in the past hasn't included many women, people of color, elderly people, children, or people from non-wealthy nations. For example, Katie Winkle of the KTH Royal Institute of Technology in Stockholm, describing her project to figure out what a feminist robotics will look like, referred to the many AI assistants that have female voices, subservient mannerisms, and too much tolerance for abuse. In her talk, Patricia Alves-Oliveira of the University of Washington noted that robots for children are usually designed without their input. She and her colleagues were presenting a process they've invented, using games and fun exercises, for getting children to design a robot they'd like to play with.

Different Ways of Designing Robots?

Some in the field believe it should do more than expand the pool of people on whom robots are tested. They say the lab-experiment model — develop the robot, test it in controlled experiments, be guided by the results of those experiments — is not sufficient to design robots that will help, support, sustain and delight their human partners. In her presentation, for instance, Maria Luce Lupetti of TU Delft in the Netherlands argued that robot-makers can and should benefit from "designerly ways of knowing" (both principles and ways of working) to "challenge what we believe a robot should look, act and be like." There is a difference, she said at the meeting, between engineering design (which I take to be getting the robot to do the task foreseen by its makers) and "design design" (which — I am kind of guessing here — is thinking originally about how the machine can serve human hopes and needs).

The Dawn of Cacorobotics?

One innovative way robots can relate to humans is by being annoying. Is there going to be a subfield of "cacorobotics" in the near future (from caco-, a Greek-derived prefix meaning 'unpleasant', as in "cacophony")? Maybe so, to judge by a number of presentations I saw in which robots irritated, ignored, insulted and ridiculed humans. (I'm not including the haunted desk, since, as I mentioned, a majority of the 16 people who worked with that robot actually liked it.)

As part of her explorations in feminist robotics, for example, Winkle and her colleagues created a talking head that responds to sexist insults by telling the insulter that he's a f*cking idiot. Winkle's experiments with school-age kids (which used milder language) and with teen-agers (who got the full four-letter treatment) showed that this aggressive talk is effective at curbing sexist sentiments in both boys and girls; much more effective, in fact, than the diplomatic language ("I won't respond to that") that Siri, Cortana and other default-female AIs usually use.

In another study, Daniel Rea of the University of New Brunswick and his colleagues presented a robot that gets longer and better effort out of people when it says things like "Is this all you can do?" and "Are you even trying?" If a robot gets more of the response desired from people this way, is it a successful robot even if it makes people feel bad? (I'd go with "no," but I doubt everyone would agree.)

Another reason to build cacorobots is to discover what not to do. Take the project described by Hadas Erel and her colleagues from the Media Innovation Lab at IDC Herzliya in Israel. The researchers created a 3-player ball game for one human and two white tabletop robots (one rather like a globe and the other vaguely reminiscent of the friendly lamp in the Pixar logo). The game was set up so that the two little robots sometimes ended up keeping the ball to themselves as they batted it back and forth. Did the human participants laugh this off? They did not, instead reporting that they felt hurt and excluded. Something to watch out for if the robot-filled world of designers' dreams comes to pass.

This and That

Chinese researchers demonstrate a soft submarine robot that can swim in the crushing pressure of the deepest ocean, writes Carolyn Gramling at Science News, with a link to the paywalled Nature paper and cool video.

“Can a robot write a theater play?” asks this project to commemorate the centennial of R.U.R., the play that coined the word “robot.” (As usual with headlines that end in a question mark, the answer is “no.”)

I guess it had to happen eventually. Boston Dynamics’ Spot robot, once obtained only by lease to companies and governments, became available for outright sale for $74,500 last summer. So someone bought one and started a YouTube channel about her “robot dog.”