When I’m beginning a long project, I sometimes imagine a dense and tangled jungle. Conceiving the work, planning it, and selling it are like a helicopter flight over that place. It’s easy from up there to note the big features and come up with a general plan. But the actual writing, I know, will be a walk through that jungle – hacking away through the dark and mosquito clouds, tripping over this vine, getting lost on that trail.

In flight mode you can think “it’s an article about how robotics and large language models can be combined.” The hard trek, on the other hand, is struggling to describe how deep learning works in just the right way to fit this particular paragraph. Do I understand it? Am I explaining it in enough detail? Am I going into too much detail? Wait, did I already describe this elsewhere? Didn’t I have a better metaphor written down somewhere in all this? Does this make any sense?

The jungle trek takes longer and is overall less pleasant than the helicopter ride. On the other hand, if you don’t do the trek, you don’t know the jungle.

We Shouldn’t Shirk Experiences We Need to Thrive

Work that isn’t easy or glamorous or even particularly interesting is the only kind that leaves you with deep knowledge – of your subject, your craft, and your self. Those fruits of the hard trek, and the pride they inspire in work well done, are precious. We don’t want artificial intelligence or robots taking them away.

That’s why people have repeatedly surprised tech executives by reviling uses of AI that those executives wanted to sell us.

Look, said Apple, our thing (an iPad), with which you can point and click and virtually scribble, literally crushes all these laborious art-making tools, from paints to pianos to sculptor’s clay. (Apple hadn’t yet unveiled its AI offerings, but people rightly saw AI in the message.) Look, said Google, our thing (Gemini AI) can write this kid’s fan letter for her, so that all she has to do is tweak the words and hit send.

The corporate people who approved these ads must have thought the world agreed with them about ease and efficiency. But they were wrong.

It’s important, though, to spell out why they were wrong. Otherwise, we risk plunging into a confusion that tech companies can and will exploit to persuade people that they’re overreacting.

‘This Squicks Me’ Isn’t A Good Way to Judge

It’s only human to be repulsed, at first glance, by new things – especially if they seem to undermine relationships and conventions that give us comfort. So when people say “that’s horrible” about a new use for a robot or an AI, they might just mean “that is not the way I have always done it, and it threatens my intuitions about the world.”

That’s a state of mind that, for obvious reasons, you don’t want guiding your decisions about AI.

So how do you filter out the temporary emotional flare-ups provoked by novelty and disorientation? How do you tell when AI is not just unfamiliar, but really and truly bad for people?

At the moment, I do it by reference to that metaphor of helicopter versus trek.

AI, obviously, is the chopper ride. You fly over the jungle of, say, “I’d love to give a speech about where AI should, and should not, be used for writing. I’d like it to sound formal and authoritative and to cite some good books.” And a large language model responds with words that sound a lot like a human who has walked through every detail. You’ve saved time and effort, at the cost of learning and practice and self-esteem. In such a moment, AI’s version is worse than worthless. It’s robbing people of experiences they need to have.

So: How do we identify AI work that harms the people it’s supposed to assist? When does it need to be you, not your algorithmic agent, who walks through the jungle?

I When the Meaning of the Task Is That I Put in the Effort

First, there is work whose moral significance derives from the fact that a person does it. When ChatGPT says “I apologize, but I’m not able to provide or find specific memes or images,” we know that no human being is excusing himself or herself. It’s convention being obeyed. Imagine, though, that you told a more advanced AI agent “find out why Bjorn is mad at me and email him an apology for it.” The resulting email might be a lovely piece of work, but it too would be meaningless. For the apology to be real, it has to be your labor – figuring out your offense, and taking the trouble to express your regret.

This was the violation portrayed in Google’s ad. Written by a machine, the child’s fan letter became meaningless. The point of the letter was for the kid to sacrifice some time and effort to contact her idol. A machine-written version would be like a medal for a battle you didn’t fight in.

Second, there is work that teaches you something. In field after field, from coding to business consulting to legal research, it’s clear that current LLMs are not entirely trustworthy. You need to use your judgment, your fine feel for the work you do, to check and improve on their results.

But where are you supposed to develop that fine feel, except in walking through the jungle? As the great SF writer Ted Chiang put it in a perceptive essay, “using ChatGPT to complete assignments is like bringing a forklift into the weight room; you will never improve your cognitive fitness that way.”

I’ve already written about Matt Beane’s extensive work on how people learn skills, so I won’t belabor this here. Briefly, he and other scholars have found that AI and robots, used to make work more efficient, really do deprive workers of the chance to practice their craft and develop expertise.

II When the Task Is Also a Lesson

So another area in which AI should not be doing scut work is any realm where the doing of that work teaches craft and skill. This, of course, is why teachers at all levels are frantic about students using AI to write and research. And why schools insist little kids do math on paper in the early grades. Otherwise they won’t understand what their calculators tell them. The same principle goes for AI.

And for robots as well. Sure, in some areas there is no argument against labor-saving. When I interviewed farm laborers for this article a few years ago, they told me a robot harvester made their work far less painful and tiring. But as robots become more adept, there is a risk that they’ll displace apprentices. In surgery, where medical residents get less time with patients due to robots, this is already happening.

III When the Task Is to Create Art

A third kind of work that humans should not delegate to AI is the making of art. “The selling point of generative A.I.,” Chiang writes, “is that these programs generate vastly more than you put into them, and that is precisely what prevents them from being effective tools for artists.” Having AI do your writing, or painting, or composing, is like ordering a nice takeout dinner. You can enjoy it, but you didn’t cook it. It’s not yours.

This is the most (perhaps only) controversial criterion on my list. Many people don’t agree. Do they have a point? If ChatGPT can write a better poem than I can, why shouldn’t I let it? For the moment, it cannot, and the issue isn’t presenting itself. But suppose it could (as one day it might). If you let an LLM write the thing for you, Chiang writes,

> it has to fill in for all of the choices that you are not making. There are various ways it can do this. One is to take an average of the choices that other writers have made, as represented by text found on the Internet; that average is equivalent to the least interesting choices possible, which is why A.I.-generated text is often really bland. Another is to instruct the program to engage in style mimicry, emulating the choices made by a specific writer, which produces a highly derivative story. In neither case is it creating interesting art.

You might object that the concerns of those who write novels, stories and screenplays don’t apply to other kinds of writing, and to other kinds of creative work.

This was part of the burden of this reply to Chiang by Matteo Wong, a staff writer at The Atlantic. Wong argues that the two criteria Chiang defines as art – lots of human-made choices and an intent to communicate something – are too narrow. “You do not need to demonstrate hours of toil, make a lot of decisions, or even express thoughts and feelings to make art,” he writes.

To a word guy like me, that sounds strange. And in fact the techniques and people Wong cites – “automatism, randomness, and chance,” Salvador Dalí, Jackson Pollock, Joan Mitchell, Andy Warhol – come from the visual arts, not the written ones. Whatever vistas they may open up in visual media, “automatism, randomness and chance,” applied to words, yield ennui and nonsense, not art.

I’m not suggesting here that writing need be meat-and-potatoes prose, obedient to today’s conventions. Experimentation is great! And randomness has been part of writerly exploration. Philip K. Dick, for example, sometimes cast the I Ching to decide plot questions. But Dick did not let the I Ching decide which word came next. The move Wong celebrates – emptying the creative process of a thinking, feeling consciousness – doesn’t work in the medium of words.

Still, he makes an important point. When a new technology offers to do work that once had to be done by hand, artists will naturally experiment with it – not to hand over their job, but to see how it can serve them. What happens, they ask, if I cede control of this part of the process? In other words (my analogy, not Wong’s), what the I Ching was to Dick, ChatGPT could be to a novelist writing this morning.

I think Chiang is correct to say that a story written by ChatGPT is not and cannot be art. But perhaps Wong is right to suggest that in the future most “AI art” won’t be like that. That, instead, it will be made by people who used AI in some way, but still made their art themselves. People who are still walking through the jungle of creation.

In that jungle, of course, the lines will be blurry. Maybe AI drafts the first version of a letter, then you spend an hour making it your own. Maybe you’re tongue-tied as you approach the blank page that will be your fan note, and AI’s bland version gets you started on your own. Maybe you’re not sure about the conventions of a detective story, and AI’s outline starts you thinking more clearly. I don’t think any of those instances would violate the three principles I’ve described here. You, though, might not agree.

A Word About Having No Talent Whatsoever

And what about instances in which you aren’t actually trying to do anything creative? Maybe, like me, you have no talent for drawing whatsoever. Maybe, like me, you often astonish visually sensitive people with the things you don’t notice (oh, right, now that you mention it, I do see that the walls are a different color and the table has moved).

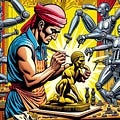

Such am I. So I am not capable of creating anything approaching a good illustration for these posts. And I can’t afford to hire an illustrator. So I use AI to generate an image that roughly represents my idea of a good illustration for a post. I am so far from being able to draw, paint or photograph that this product is not any kind of substitute for my own efforts. When you have zero capability to make a thing, it’s either AI or nothing.

Still, I have mixed feelings about these AI images. I don’t want to fall into the false but flattering notion that, thanks to AI, I can do anything. What is really the case is that, thanks to AI, I can order up some things that I like well enough to use. So I try to remember that there are things I do fairly well; things I can learn; and things I should not attempt. I don’t mind delegating work in this third category to AI.

I think it is fine, as long as I don’t let the AI habit blur the lines. If I can’t walk the jungle, give me the chopper ride. Trust me to remember that I’m just a tourist flying over.¹

So this is my list, at the moment anyway. Don’t use AI when the meaning of the product is that you did work. Don’t use it if it deprives you of a chance to learn or practice something. Don’t use it to do your line-by-line jungle-hacking work of making art.

Maybe my rules of thumb are too strict; maybe there are more or different principles to be articulated. But it’s important as AI hype rolls on to recognize two things: There is work we don’t want AI to do, and we each need to figure out what that work is.

¹ A separate and immense problem that should never go unmentioned: AI image generators (and text generators and music generators) work because they’ve been trained on the creations of humans. These people have been rewarded with precisely nothing for the labor they’ve unintentionally provided to guide the algorithms.